Analytics should drive decisions, not describe them

We were burning thirty-plus hours of our time every single week at Cars24.

That is more than 120 hours a month spent on “what happened.” We would sit in a room for an hour and the first 45 minutes would always go to deep-dive cuts an analyst had to pull on the spot, numbers nobody had lined up before the meeting. By the time we actually got to a decision, the meeting was over and the opportunity was gone. We were not being analytical. We were just being slow.

The fix was not a better dashboard. It was removing the human from the reporting loop entirely.

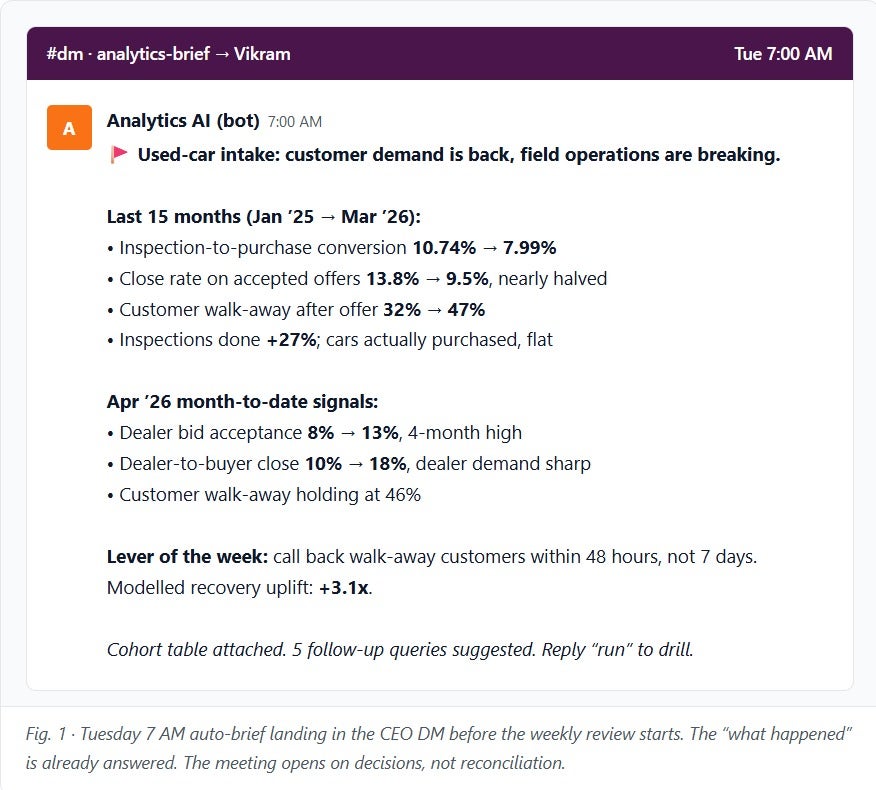

We have now deployed an AI agent that handles the entire pre-meeting analysis. It pulls the data, identifies the levers that moved, and flags the single most important fix, all before the team even sits down. We have effectively recovered those thirty hours. Now, we do not spend our time explaining the past. We spend it deciding the future.

This is what an AI-first organization actually looks like. It is not a deck or a pilot. It is a production-ready system that has fundamentally changed our speed of execution.

And it is not just about the time we have saved. It is about the depth. Our AI agent is surfacing insights with five times the granularity of a human analyst, identifying growth levers that used to be buried in the noise. It is the difference between seeing a “trend” and seeing the exact friction point that is costing us volume.

None of this happened by accident

The auto-brief in my DM is the output. The input was twelve weeks of rebuilding the layer underneath it.

Three months ago I told the analytics team to stop building dashboards. Not pause. Not reprioritize. Stop. For twelve weeks. No new views, no new KPIs, no new vanity reports. The only instruction was to rewrite the reporting layer underneath everything. Clean the measures. Kill the duplicates. Fix the joins. Name owners. Produce nothing that could be demoed in a leadership review.

This week that call paid off. And the lesson from it is not about data. It is about what a leader is actually paid to do.

What a CEO pays for with this kind of bet

Every leadership team in every company I have run has the same instinct. When something is not working, ask for another dashboard. Add another chart. Slice the number another way. It feels productive. It is almost always a substitute for doing the harder thing.

The harder thing is admitting that the layer underneath the dashboards is broken, and that the answer is not a new view but a three-month rebuild of the plumbing.

Every leader asking for another dashboard is doing the reasonable thing for their week. It is the job of the CEO to do the unreasonable thing for the year. To say no to twenty reasonable requests so that one unreasonable investment can actually land.

I said no a lot in the last twelve weeks. To regional heads asking for market-level conversion views. To product leaders asking for funnel reports by variant. To my own board deck, which I rebuilt twice with older numbers because the new ones were not ready yet. Each of those nos felt expensive at the time. None of them was.

What the reporting layer actually is (and why the old one was broken)

Fast context for readers who have never touched a BI stack.

Every dashboard, every weekly review, every board-deck chart at Cars24 pulls its numbers from a single set of back-end datasets called the “semantic layer.” It is the thing that sits between the raw database and anything a leader looks at. Every number on a conversion slide, every regional cut in a Monday review, every auction-health chart starts life here.

When the layer is clean, the same question asked by the CEO, a regional head, and a field-ops lead returns the same number. When the layer is not clean, it does not.

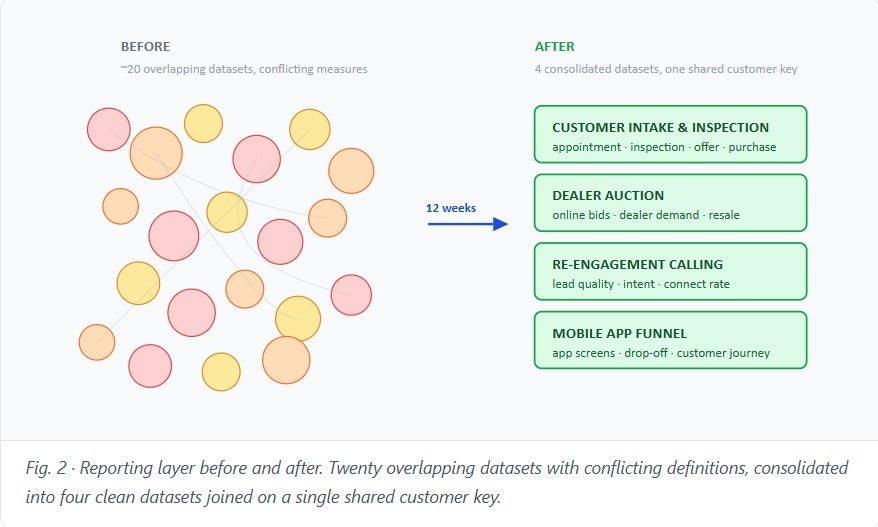

Ours was not clean.

The old workspace had roughly twenty overlapping datasets for a single business line. The same measure, something as basic as how many inspections become actual purchases, existed in four different places with three different definitions. Table joins were hard-coded inside the query logic instead of modelled properly. Refresh timing was inconsistent. Ownership was nominally shared across the team, which in practice meant nobody was on the hook for a stale number. When a leader flagged a broken chart on Monday morning, the cost of tracing it back to the root cause was a week. Sometimes two.

Twelve weeks of cleanup compressed that into four consolidated datasets, one technical owner per dataset, a named business stakeholder, a single shared customer-record key joining all four, and a documented refresh schedule. Every measure was either rewritten or killed. The ones that survived are the ones with a clear business owner who can defend the definition.

This is the plumbing. None of it is glamorous. All of it is the reason the Monday answer is now four seconds.
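
For readers who do want to see the plumbing, the rules above can be sketched in a few lines of Python. This is a minimal illustration, not the actual Cars24 setup: the dataset names, owner labels, the `customer_id` key, the refresh times, and the `validate_layer` helper are all hypothetical. It checks the four things the cleanup enforced: one shared join key, a named owner and stakeholder per dataset, a documented refresh schedule, and exactly one definition per measure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Measure:
    name: str
    definition: str       # plain-language business definition
    business_owner: str   # the person who can defend the definition


@dataclass(frozen=True)
class Dataset:
    name: str
    technical_owner: str
    business_stakeholder: str
    join_key: str
    refresh_schedule: str
    measures: tuple = ()


def validate_layer(datasets):
    """Return a list of problems; an empty list means the layer passes."""
    problems = []

    # Every dataset must join on the same shared key.
    join_keys = sorted({d.join_key for d in datasets})
    if len(join_keys) > 1:
        problems.append(f"datasets use different join keys: {join_keys}")

    defined_in = {}  # measure name -> first dataset that defines it
    for d in datasets:
        if not d.technical_owner:
            problems.append(f"{d.name}: no technical owner")
        if not d.business_stakeholder:
            problems.append(f"{d.name}: no business stakeholder")
        if not d.refresh_schedule:
            problems.append(f"{d.name}: no documented refresh schedule")
        for m in d.measures:
            if not m.business_owner:
                problems.append(f"{d.name}.{m.name}: no business owner")
            if m.name in defined_in:
                problems.append(
                    f"measure '{m.name}' is defined in both "
                    f"{defined_in[m.name]} and {d.name}"
                )
            else:
                defined_in[m.name] = d.name
    return problems


# Hypothetical clean layer: four datasets, one key, one definition per measure.
clean_layer = [
    Dataset("inspections", "tech_a", "biz_a", "customer_id", "daily 06:00",
            (Measure("inspection_to_purchase_rate",
                     "purchases / inspections", "biz_a"),)),
    Dataset("purchases", "tech_b", "biz_b", "customer_id", "daily 06:00"),
    Dataset("auctions", "tech_c", "biz_c", "customer_id", "hourly"),
    Dataset("re_engagement", "tech_d", "biz_d", "customer_id", "daily 06:00"),
]

# Hypothetical leftover from the old workspace: no owners, a different key,
# and a second, conflicting definition of the same measure.
messy_layer = clean_layer + [
    Dataset("legacy_conversion", "", "", "lead_id", "",
            (Measure("inspection_to_purchase_rate",
                     "purchases / leads", ""),)),
]

for problem in validate_layer(messy_layer):
    print(problem)
```

A check like this is cheap to run whenever a dataset is added or changed, which is one way to keep a layer from drifting back toward twenty overlapping datasets.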

The bet underneath the bet

The other leadership call I made three months ago was on Aakash Kathuria. He was three weeks into the Head of Analytics role when I asked him to do this. A new leader’s first big project is almost always a product ship, because that is what builds political capital. I asked Aakash to do the opposite. Go dark. Rebuild the plumbing. Produce nothing for eight weeks. Defend the team from every leader who asks why there is no output.

That is a hard ask for a new hire. It exposes them on the first bet they make. If the cleanup failed, the org would have concluded that the new Head of Analytics could not ship, and he would have spent the next year climbing out of that hole.

I made the bet on Aakash because I have learned something about hiring analytics leaders the hard way. The ones who ship dashboards fast are not the ones who change the organization. The ones who change the organization are the ones who will take a foundational bet in the first month, knowing it looks like nothing for the first eight weeks. The willingness to take that bet is the signal.

What actually happened

The analytics team collapsed a twenty-dataset workspace down to four clean, consolidated ones. Every measure was rewritten or killed. Every join was made explicit. Refresh timing was documented. Owners were named. The details are Aakash’s to tell and he has written his own post about the craft side of it.

The leadership moment, for me, was on Monday this week.

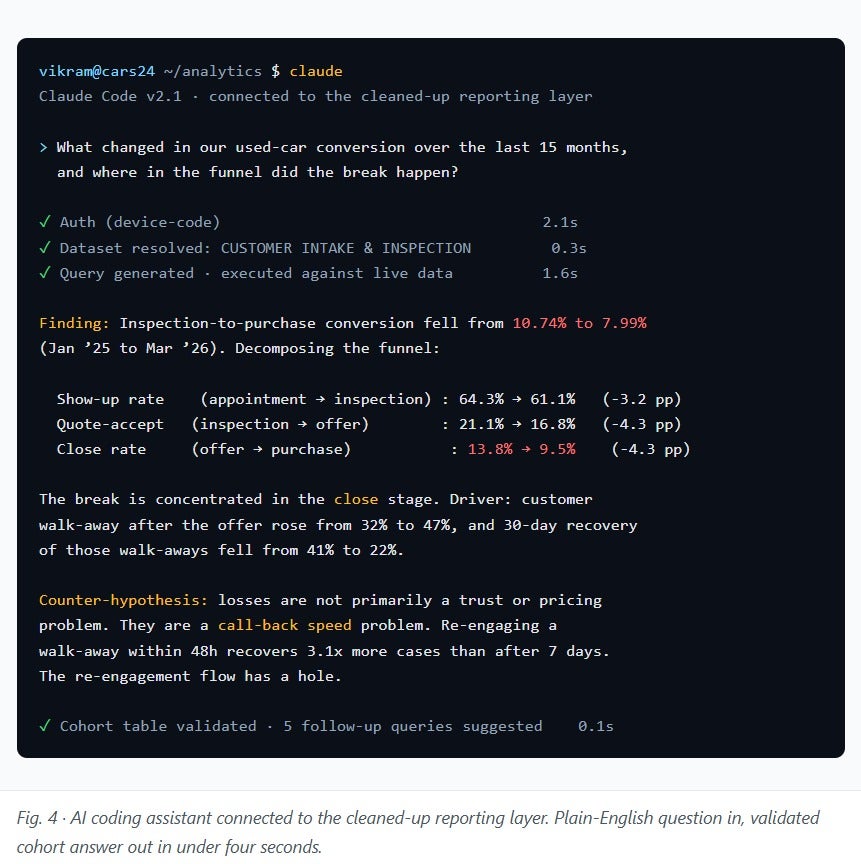

We pointed an AI coding assistant at the cleaned-up layer directly. Thirty seconds of setup. No custom build, no vendor integration, no new tool. And I typed a question in plain English.

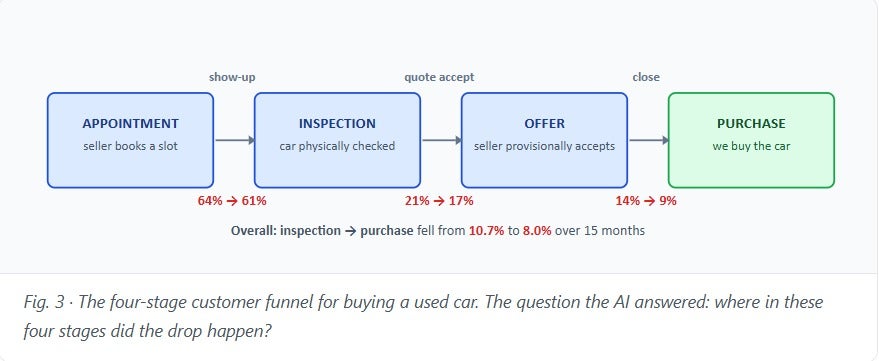

“What changed in our used-car conversion over the last fifteen months, and where in the funnel did the break happen?”

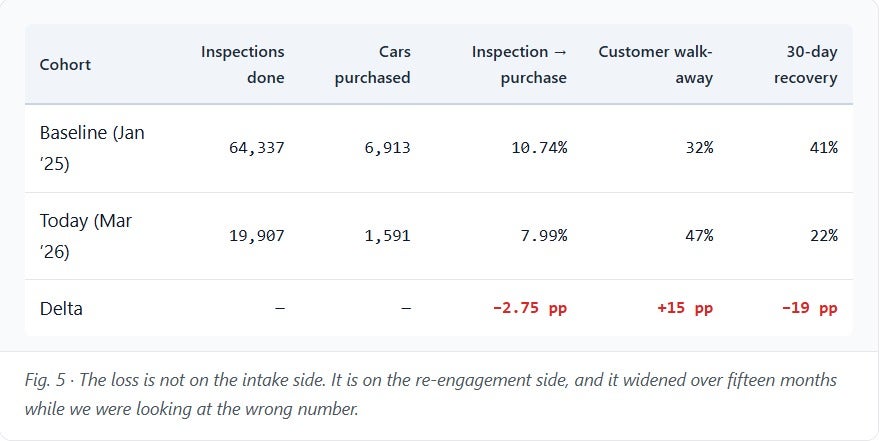

Four seconds later: a real answer. Within the hour we had reframed a two-year-old assumption about our business (“we don’t have enough customers bringing cars in”) into a completely different one (“our re-engagement flow has a hole in it”). The cohort data said losses from customer walk-away were not primarily a trust or pricing problem. They were a call-back speed problem. That is a product fix, not a hiring fix, and the commercial upside is meaningful.

The specific finding matters, and Aakash’s post walks through the numbers. The leadership finding is this: a CEO thesis I had held for two years was wrong, and it had been wrong the entire time, because the underlying data had been too messy to query with confidence. Three months of plumbing was the cost of being able to see it.

What this tells me about leadership

Three things I am taking out of this that apply beyond analytics.

One. The most expensive thing in an organization is being confidently wrong for a long time. For two years we were confident about something that was wrong. That confidence was not cheap. It shaped hiring, capex, field-ops policy, and the patience of an entire customer-facing function. The amount of org energy spent on the wrong answer probably exceeds the cost of twelve weeks of cleanup by an order of magnitude. Infrastructure investments look expensive in the quarter. They are almost always cheaper than the alternative, which is another year of being wrong.

Two. Leadership is mostly saying no to reasonable requests. The reason this cleanup almost did not happen is that every individual dashboard ask was reasonable. None of them was wrong on its own. But twenty reasonable yeses add up to a team that never gets to fix the foundation. A CEO who cannot say no to reasonable asks will eventually run a company with twenty datasets and no answers.

Three. Betting on a new leader is mostly about what you protect them from. I did not write a single line of code in the last twelve weeks. Most of what I did for the cleanup was air cover. Telling leaders to wait. Telling my own board to wait. Telling myself to wait. The cleanup did not need my technical input. It needed my willingness to hold the line while the team did work that did not yet have a headline.

What is next

Three moves in the next forty-five days.

One. The same playbook starts this week on our used-car-selling side of the business, this time on a four-to-six-week timeline. Same expected outcome. The analytics team has earned the second bet.

Two. We are opening the query layer directly to fifteen business leaders. Not because I want them writing code. Because the cost of asking a question has dropped from two weeks to forty seconds, and the constraint on better decisions in this company is no longer analyst capacity. It is the willingness to ask.

Three. The Tuesday auto-brief that now lands in my DM every Tuesday morning at seven becomes the single source of truth for leadership reviews. We are killing three standing analytics meetings and replacing them with one conversation triggered by the brief.

Credit

Aakash Kathuria made his first cross-business bet land.

If there is a lesson for leaders

Infrastructure bets look wasteful in the quarter and look obvious in the year. Most leaders know this intellectually and then, when the moment comes, still fund the next dashboard. The call a CEO is actually paid to make is the one that produces nothing for eight weeks and then reframes a two-year assumption in a single hour.

I made one of those calls this quarter. I intend to make more.

Vikram
