How Cars24 Prices Your Pre-Owned Car

What you're about to read is the story of how we rebuilt the system we use to price cars when we buy them from you. The old approach had worked for a while, but as Cars24 grew, it stopped keeping up. So we took it apart and built something better.

Hi, we are Data Science Builders from the Cars24 team.

What started as an ambitious problem quickly turned into a far more intense and complex journey than we initially anticipated. As you read further, you will begin to understand the challenges, trade-offs, and learnings that shaped this journey.

It wasn’t easy, but we persevered, evolved, and ultimately built something we are genuinely proud of today.

The Deceptive Difficulty of Pricing a Used Car

Used-car pricing looks easy from the outside. There is a market, there are comparable cars, you fit a model, you produce a price. In practice it is nothing like that.

A new car has an MSRP, and that one number anchors everything. A used car has no such anchor. Two cars that share a make, model, variant and year can still differ across all of the following:

- Odometer reading and ownership history

- Accident history, paint and panel condition, tyre wear and service records

- The city they are registered in: demand for a particular model can shift meaningfully between two neighbouring towns

- Fuel price changes, which ripple through the diesel-petrol mix in a few weeks

- A face-lift announcement from an OEM, which can cut residual values overnight on the outgoing variant

And the seller's perception of what their car is worth, which is the number we have to negotiate against, does not always track these shifts.

Every offer must do four things at once:

- Be fair to the seller

- Be attractive enough to win the car

- Leave room for the business downstream

- Stay consistent with offers on similar cars sitting on the same lot

The pricing approach we used earlier handled the first version of this problem reasonably well, but it did not scale gracefully as the business grew more complex. That's why we rebuilt it.

The Signals That Forced Us to Rebuild

The earlier system was a mix of rules, gut-feel adjustments, and inputs from sources that did not always talk to each other. It worked when volumes were smaller and the inventory mix was more uniform. As the business scaled, three kinds of cracks started to show.

1. Inconsistency in the offers themselves

Two cars that looked nearly identical on the inspection sheet, sitting in the same city, could walk out with offers that differed by a noticeable amount. Usually this happened because one data signal happened to dominate the calculation on that day while a different signal had dominated an hour earlier. We could see it happening. We could not always explain why, which made debugging frustrating and made the team lose confidence in the numbers.

2. Market lag

A festive-season surge in demand for a popular hatchback would show up in our offers a couple of weeks after the fact. A regulatory change in one state would take longer than it should to flow through to prices there. The system was reactive when what the business needed was to be a step ahead.

3. Uneven business performance

We were missing some acquisitions we should have won, and on others we were paying a little more than we needed to. There was no single bug to fix. The system was doing roughly the right thing on average and the wrong thing often enough at the margin to justify a serious rethink.

The brief we set for ourselves: build a layered system, not a single model. Each layer should own a clear responsibility. The connections between layers should be clean enough that we can update one piece without breaking the others. The whole thing should be easy to monitor, easy to debug, and able to get smarter from real outcomes.

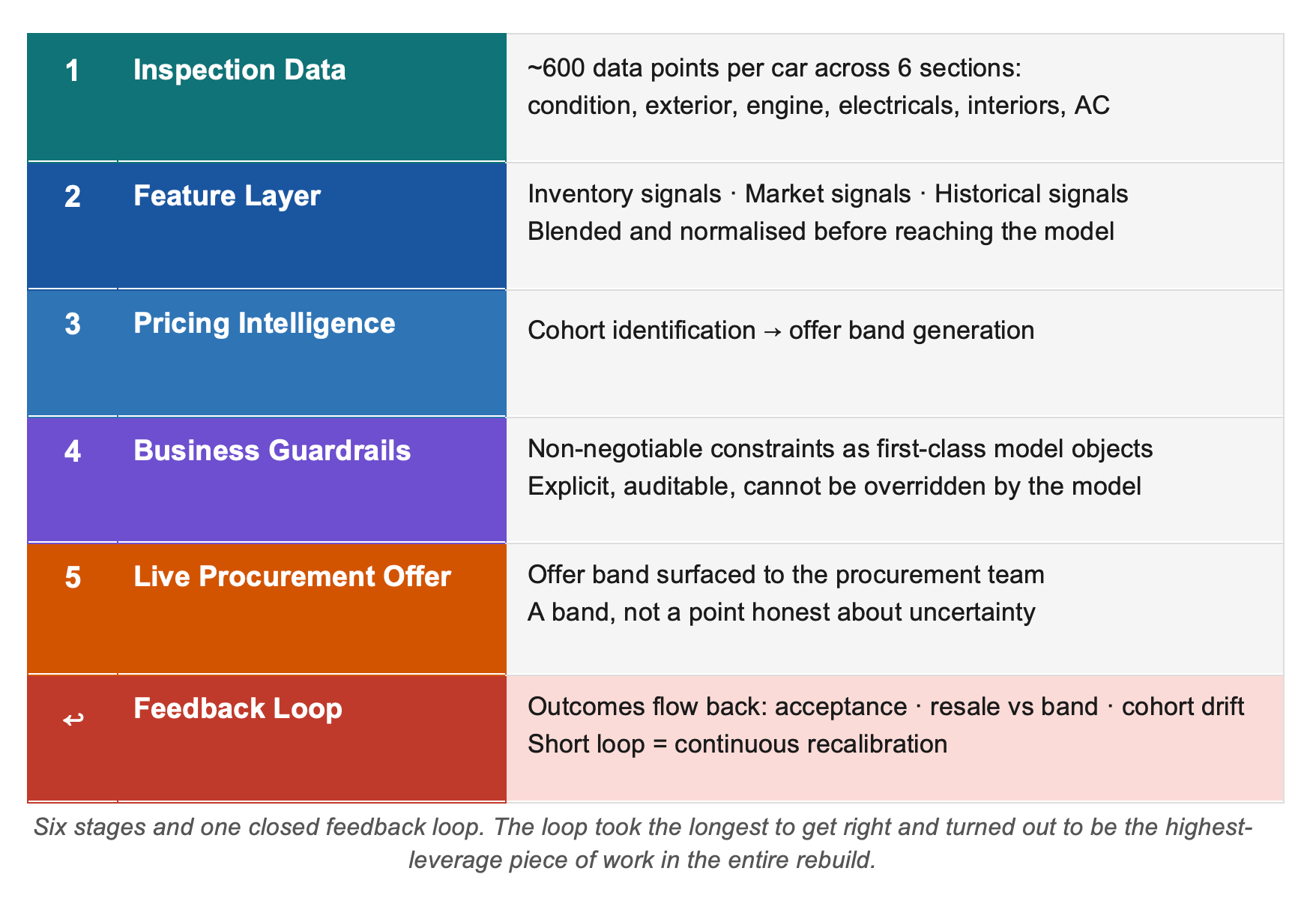

The New Stack at a Glance

The new system works as a pipeline. When a car comes in for inspection, the data from that inspection flows through a series of layers, together with what we know about the market and how similar cars have behaved in the past. Each layer does a specific job.

- The first layer organises and blends the signals.

- The second uses those signals to produce not a single price but a price band, a range within which an offer makes sense given market conditions and what we know about how sellers respond to offers at different levels.

- That band then passes through a set of explicit business guardrails, constraints that the model cannot override.

- What comes out the other end is the live offer.

- And then, critically, the outcomes feed back in.

Did the seller accept? Did the car resell where we expected? Did similar cars in the same group behave the way we predicted? Those answers loop back into the system and sharpen it over time.

The pipeline has six stages and one closed feedback loop. The loop took the longest to get right, and it turned out to be the highest-leverage piece of work in the entire rebuild.

A Closer Look at Each Layer

Data Signals

The pricing system draws on three kinds of information:

- From the inspection itself: what the car is, what condition it is in, where it is registered, how far it has been driven.

- From the market: what comparable cars are listed for, what demand looks like in that city, what the competitive landscape is.

- From our own history: how cars in similar groups have behaved, what acceptance rates have looked like, where resale prices have landed relative to what we expected.

These signals are not fed directly into the model in their raw form. They are blended and processed in a feature layer first. This is a deliberate choice. It keeps the downstream layers simpler and easier to update independently when something changes.
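Here is a minimal sketch of what such a feature layer could look like. All the names are hypothetical (`InspectionSignals`, `MarketSignals`, `HistorySignals`, `build_features` and their fields), not Cars24's schema. What matters is the shape. Three raw sources go in and one flat record comes out, and the downstream layers only ever see that record.

```python
from dataclasses import dataclass


# Hypothetical containers for the three signal families; field names are illustrative.
@dataclass(frozen=True)
class InspectionSignals:
    make_model: str
    year: int
    city: str
    odometer_km: int


@dataclass(frozen=True)
class MarketSignals:
    median_listing_price: float  # comparable listings in the same city
    city_demand_index: float     # 1.0 means typical demand for this model in this city


@dataclass(frozen=True)
class HistorySignals:
    group_acceptance_rate: float  # share of past offers accepted in this car's group
    group_resale_ratio: float     # realised resale / expected resale for the group


def build_features(ins: InspectionSignals, mkt: MarketSignals,
                   hist: HistorySignals, as_of_year: int) -> dict:
    """Blend raw signals into one flat feature record for the downstream layers."""
    age = max(as_of_year - ins.year, 0)
    return {
        "age_years": float(age),
        "km_per_year": ins.odometer_km / max(age, 1),  # avoid dividing by zero for new cars
        "demand_adjusted_listing": mkt.median_listing_price * mkt.city_demand_index,
        "group_acceptance_rate": hist.group_acceptance_rate,
        "resale_surprise": hist.group_resale_ratio - 1.0,  # > 0: group resold above expectation
    }
```

Because the model consumes only the returned dictionary, adding a new market source means changing `build_features` and nothing downstream of it.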

Pricing Intelligence

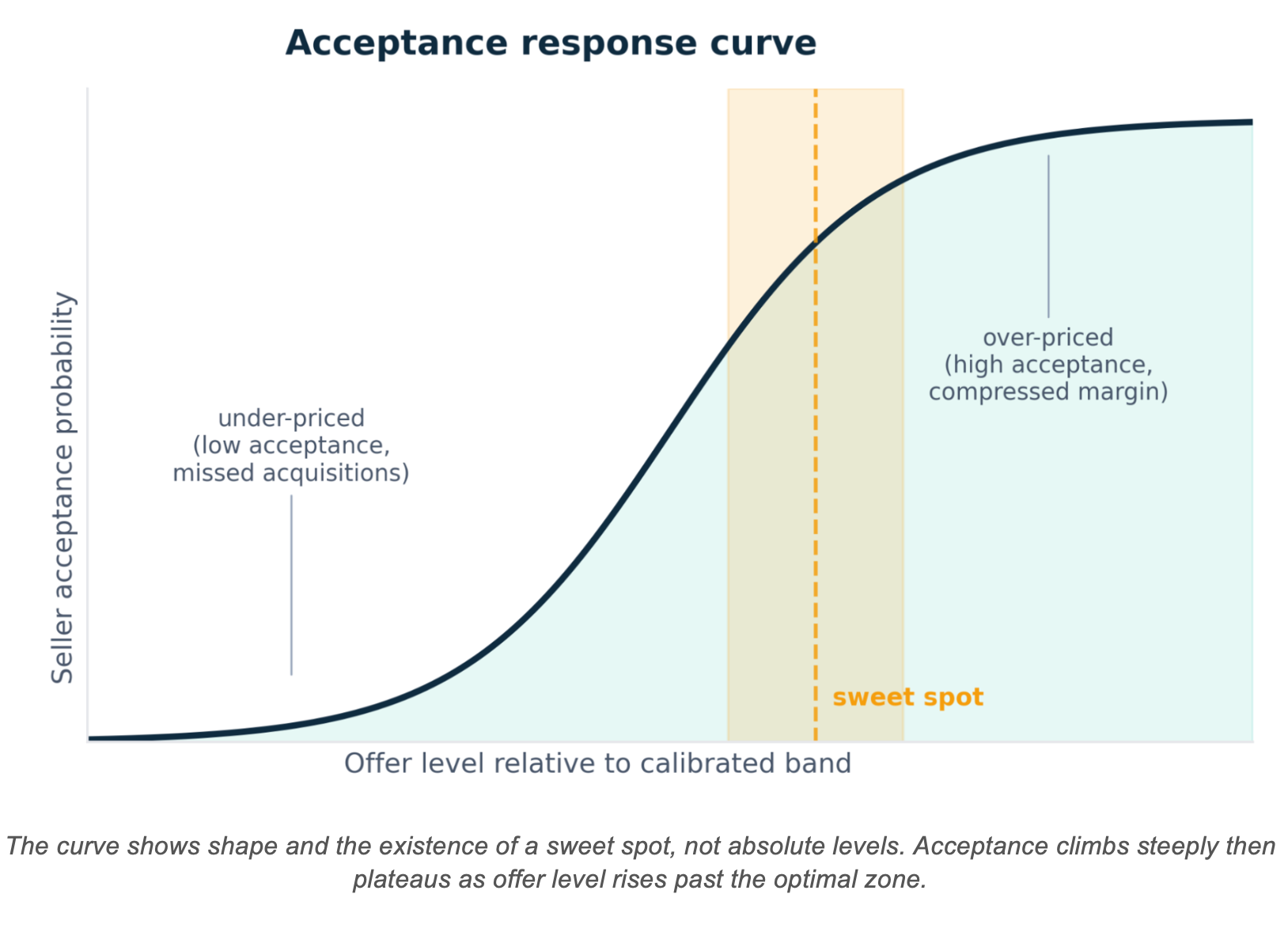

This is where most of the analytical work sits. Rather than producing a single number, this layer reasons about a price band. The band reflects what the market suggests and, importantly, what we know about how sellers actually respond to offers at different levels.

There is a curve to seller behaviour:

- Offer too little and the seller walks away.

- Offer more and acceptance climbs, but at some point you are spending more than you need to.

- The system tries to find the zone where acceptance is high enough to win the car without giving away margin unnecessarily.
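A small sketch makes the trade-off concrete. It rests on two illustrative assumptions, neither confirmed as Cars24's. First, acceptance follows a logistic curve in the ratio of offer to fair value, with made-up midpoint and steepness values. Second, the quantity being maximised is expected margin: the probability of winning the car times the gap between expected resale and the offer.

```python
import math


def acceptance_prob(offer: float, fair_value: float,
                    midpoint: float = 0.95, steepness: float = 25.0) -> float:
    """Assumed logistic curve: chance the seller accepts, given offer / fair_value.
    midpoint is the ratio at which acceptance is 50%; both defaults are made up."""
    ratio = offer / fair_value
    return 1.0 / (1.0 + math.exp(-steepness * (ratio - midpoint)))


def expected_margin(offer: float, fair_value: float, expected_resale: float) -> float:
    """Probability of winning the car times the margin left if we do."""
    return acceptance_prob(offer, fair_value) * (expected_resale - offer)


def best_offer(fair_value: float, expected_resale: float,
               lo: float = 0.80, hi: float = 1.10, steps: int = 301) -> float:
    """Grid-search offers between lo and hi times fair value for the highest expected margin."""
    grid = [fair_value * (lo + (hi - lo) * i / (steps - 1)) for i in range(steps)]
    return max(grid, key=lambda o: expected_margin(o, fair_value, expected_resale))
```

If the offer is too low, the acceptance term collapses. If it is too high, the margin term does. With these made-up parameters, `best_offer(500_000, 560_000)` lands a little under fair value, which is the zone described above.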

One of the most useful choices we made was to group similar cars together before trying to price them. Instead of jumping straight to "what should we offer on this car?", we first ask "which group does this car belong to, and how does that group of cars typically respond to offers?" The pricing question becomes much more tractable once the grouping question is answered.

We resisted the temptation to build one giant model that tried to handle everything. Grouping first, then pricing within each group, turned out to be one of the highest-leverage decisions we made.
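Grouping first can be sketched as a two-step lookup. The group key below is a hypothetical stand-in, since the article does not say how Cars24 defines its groups. It uses the same make-model, a three-year age band and a 40,000 km mileage band. Each group then carries its own acceptance statistics, which are what a within-group pricing step would read.

```python
from collections import defaultdict
from statistics import median


def group_key(car: dict) -> tuple:
    """Hypothetical group key: same make-model, similar age band and mileage band."""
    age_band = car["age_years"] // 3         # 0-2, 3-5, 6-8 years, ...
    km_band = car["odometer_km"] // 40_000   # 0-39,999 km, 40,000-79,999 km, ...
    return (car["make_model"], age_band, km_band)


def group_stats(history: list) -> dict:
    """Summarise how each group has responded to past offers."""
    buckets = defaultdict(list)
    for rec in history:
        buckets[group_key(rec)].append(rec)
    stats = {}
    for key, recs in buckets.items():
        stats[key] = {
            "n": len(recs),
            "acceptance_rate": sum(r["accepted"] for r in recs) / len(recs),
            "median_offer_ratio": median(r["offer"] / r["fair_value"] for r in recs),
        }
    return stats
```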

Business Guardrails

The recommendation from the pricing intelligence layer passes through a set of guardrails before it becomes a live offer. These are explicit bounds that encode constraints the business cares about and that the model cannot override.

We treat the guardrails as part of the system, not as a cleanup layer tacked on at the end. There are two reasons for this:

- They prevent rare but expensive mistakes on unusual inventory.

- They make the system auditable. Any person on the pricing team can look at any offer and trace why it came out the way it did, because the constraints are written down and visible, not buried inside a model.
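Below is a minimal sketch of guardrails as explicit, named bounds. It assumes, for illustration only, that each bound is a ratio of the fair-value estimate, because the article does not describe the real constraints. The class and function names are hypothetical. The recommendation is clamped rule by rule, and every rule that changes the number leaves an entry in an audit trail. That trail is what lets anyone trace an offer after the fact.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Guardrail:
    name: str
    min_ratio: float  # lowest allowed offer as a fraction of fair value
    max_ratio: float  # highest allowed offer as a fraction of fair value


@dataclass
class GuardedOffer:
    offer: float
    applied: list = field(default_factory=list)  # audit trail: rules that changed the offer


def apply_guardrails(recommended: float, fair_value: float, rails: list) -> GuardedOffer:
    """Clamp the model's recommendation to each guardrail in order, logging every change."""
    result = GuardedOffer(offer=recommended)
    for rail in rails:
        lo, hi = rail.min_ratio * fair_value, rail.max_ratio * fair_value
        clamped = min(max(result.offer, lo), hi)
        if clamped != result.offer:
            result.applied.append(f"{rail.name}: {result.offer:.0f} -> {clamped:.0f}")
            result.offer = clamped
    return result
```

Rules are applied in order, so a later, tighter rule wins. A real system would also need an explicit policy for rules that contradict each other.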

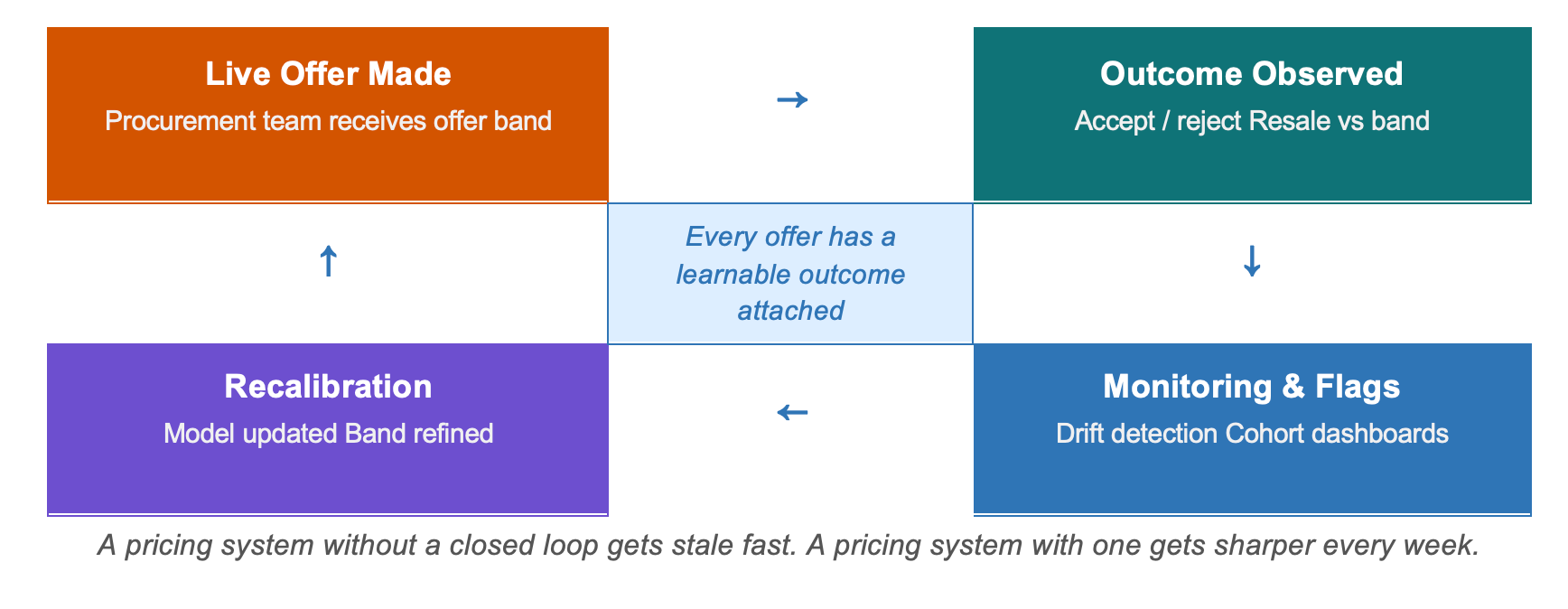

Monitoring and Feedback

The system is a closed loop by design. Every recommendation has a real-world outcome attached to it:

- Did the seller accept?

- Did the car resell at the level the model expected?

- Did similar cars in the same group land where we predicted they would?

Those outcomes feed into dashboards and into the next round of calibration. When the system starts to see drift in a particular group of cars, it flags the problem before the business metrics catch up. That early warning is what a short feedback loop buys you. A pricing system without one ages quickly. A pricing system with one gets sharper every week.
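Below is a minimal sketch of per-group drift flagging. It assumes each sold car is reduced to a residual (realised resale over predicted resale, minus one), stored in time order per group. A group is flagged when the mean of its most recent residuals moves further from zero than a threshold. The function name, threshold, window and minimum sample size are all illustrative, and a production monitor may well use a different statistic.

```python
from statistics import mean


def flag_drift(outcomes: dict, threshold: float = 0.03,
               min_obs: int = 20, window: int = 50) -> list:
    """Return groups whose recent residuals have drifted away from zero.

    outcomes maps a group id to a chronological list of residuals, where each
    residual is realised_resale / predicted_resale - 1 for one sold car.
    """
    flagged = []
    for group, residuals in outcomes.items():
        recent = residuals[-window:]  # only the most recent outcomes
        if len(recent) >= min_obs and abs(mean(recent)) > threshold:
            flagged.append(group)
    return sorted(flagged)
```

The window is what makes the warning early. A group can stay healthy on its all-time average while its last few dozen sales have already moved.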

The Models Underneath

The new procurement stack did not start from zero. Cars24 has had a production pricing engine for several years, and the new layer builds on it rather than replacing it.

When a car arrives for inspection, the team captures around six hundred data points across six sections:

- Car details: make, model, year, ownership, odometer and registration status

- Exterior condition and tyre state

- Engine and transmission

- Steering, suspension and brakes

- Electricals and interiors

- Air conditioning

Every part is labelled for the type and severity of any damage found. Photos of all four sides are taken, and the engine is recorded on audio at idle and under acceleration, because engine condition leaves a distinctive fingerprint in sound.

Two models sit on top of this data.

Profecto

Profecto is the model that estimates what a car is fairly worth in the current market. It has been trained on a large volume of historical pricing data and has been refined over years of production use. Some of the most important signals it uses:

- How far a car's odometer reading deviates from what is typical for that variant at that age (a car driven harder than average gets penalised)

- Rolling price trends at the make-model level

- Panel-by-panel damage counts

- Severity scores for significant damage

- Condition ratings produced by separate sub-models for each section of the car

When it was first built, several modelling approaches were tested on standard accuracy benchmarks. One approach won, and it has been the workhorse since.
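Two of the Profecto signals listed above are simple enough to sketch. This version makes two simplifications, and both functions and their defaults are hypothetical. It uses a flat "typical kilometres per year" figure in place of the per-variant, per-age norm. It also measures the make-model trend as the change between two rolling means of a price series.

```python
from statistics import mean


def odometer_deviation(odometer_km: float, age_years: float,
                       typical_km_per_year: float = 12_000.0) -> float:
    """Relative gap between actual and typical mileage for the car's age.
    Positive means driven harder than average. The 12,000 km/year default is illustrative."""
    expected = typical_km_per_year * max(age_years, 0.5)  # floor age to avoid zero for new cars
    return (odometer_km - expected) / expected


def rolling_trend(prices: list, window: int = 4) -> float:
    """Change in the rolling mean price: latest window vs the window one step earlier."""
    if len(prices) < window + 1:
        return 0.0  # not enough history to compare two windows
    current = mean(prices[-window:])
    previous = mean(prices[-window - 1:-1])
    return current / previous - 1.0
```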

Profundus

Profundus is the module that reads the photos. Raw car photos contain a lot of noise: trees, people, other vehicles, cluttered backgrounds. The pipeline handles this in three stages:

1. First, it isolates the car from all of that background clutter

2. Then it strips the remaining background

3. Finally, it produces a compact numerical summary of what the photos actually show, which becomes an additional input to Profecto

Adding visual signals made a real difference on cars where condition is difficult to capture in text and numbers alone, which is exactly where pricing errors tend to hurt the most.
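The three Profundus stages above can be sketched on a toy image. The sketch makes two assumptions. The car mask comes from an upstream detector, which is not shown. And, to stay dependency-free, a hand-crafted summary stands in for the learned image embedding a production system would more likely use: the mean colour of the car pixels plus the share of the crop the car occupies. All function names are hypothetical.

```python
def crop_to_car(image, mask):
    """Stage 1: keep only the bounding box around pixels the detector marked as car."""
    rows = [i for i, row in enumerate(mask) if any(row)]
    cols = [j for j in range(len(mask[0])) if any(row[j] for row in mask)]
    if not rows:
        return [], []  # detector found no car
    r0, r1, c0, c1 = rows[0], rows[-1] + 1, cols[0], cols[-1] + 1
    return ([row[c0:c1] for row in image[r0:r1]],
            [row[c0:c1] for row in mask[r0:r1]])


def strip_background(image, mask):
    """Stage 2: blank out (None) any non-car pixels still inside the box."""
    return [[px if m else None for px, m in zip(img_row, mask_row)]
            for img_row, mask_row in zip(image, mask)]


def summarise(image):
    """Stage 3: fixed-length vector of mean R, G, B (scaled to 0-1) plus car-pixel share."""
    car = [px for row in image for px in row if px is not None]
    total = sum(len(row) for row in image)
    if not car:
        return [0.0, 0.0, 0.0, 0.0]
    means = [sum(px[c] for px in car) / len(car) / 255 for c in range(3)]
    return means + [len(car) / total]
```

Whatever the summary is, it has a fixed length. That lets it be appended to the tabular features, which is the sense in which the photos become an additional input to the pricing model.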

Profecto answers: "what is this car worth?" Profundus tells Profecto what the photos add to that answer. The new procurement layer answers the harder question: given all of that, what should we actually offer for this car, given how this group has been behaving, what acceptance looks like at different price levels, and what the business needs from this acquisition?

The new procurement stack treats these two models as building blocks rather than finished solutions:

- Profecto's fair-value estimate anchors the band logic in the pricing intelligence layer.

- Its underlying signals also feed the grouping layer, so there is no duplicated work downstream.

- A separate model inside the pricing intelligence layer estimates how seller acceptance shifts as the offer level changes.

- An optimisation step then combines the fair-value estimate, the acceptance curve, and the business guardrails to land on a recommended offer band.
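The sketch below is one way to picture the optimisation step. It is a hypothetical illustration, not the production optimiser. It uses an assumed logistic acceptance curve and treats the business guardrails as a floor and a ceiling on the offer-to-fair-value ratio. The band it returns is every allowed offer whose expected margin is within a tolerance of the best achievable one. The 98% tolerance is an arbitrary choice.

```python
import math
from typing import Optional, Tuple


def acceptance(ratio: float, midpoint: float = 0.95, steepness: float = 25.0) -> float:
    """Assumed seller-acceptance curve over offer / fair_value."""
    return 1.0 / (1.0 + math.exp(-steepness * (ratio - midpoint)))


def offer_band(fair_value: float, expected_resale: float,
               floor_ratio: float, ceiling_ratio: float,
               tolerance: float = 0.98, steps: int = 401) -> Optional[Tuple[float, float]]:
    """Return (low, high) offers inside the guardrails whose expected margin is at
    least `tolerance` times the best expected margin, or None if no offer pays."""
    scored = []
    for i in range(steps):
        ratio = floor_ratio + (ceiling_ratio - floor_ratio) * i / (steps - 1)
        offer = fair_value * ratio
        # Expected margin = P(seller accepts) * (expected resale - offer).
        scored.append((offer, acceptance(ratio) * (expected_resale - offer)))
    best = max(margin for _, margin in scored)
    if best <= 0:
        return None  # every offer the guardrails allow is expected to lose money
    inside = [offer for offer, margin in scored if margin >= tolerance * best]
    return min(inside), max(inside)
```

Returning a band rather than a point leaves room for negotiation. Because the guardrails bound the search space itself, the band can never sit outside them.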

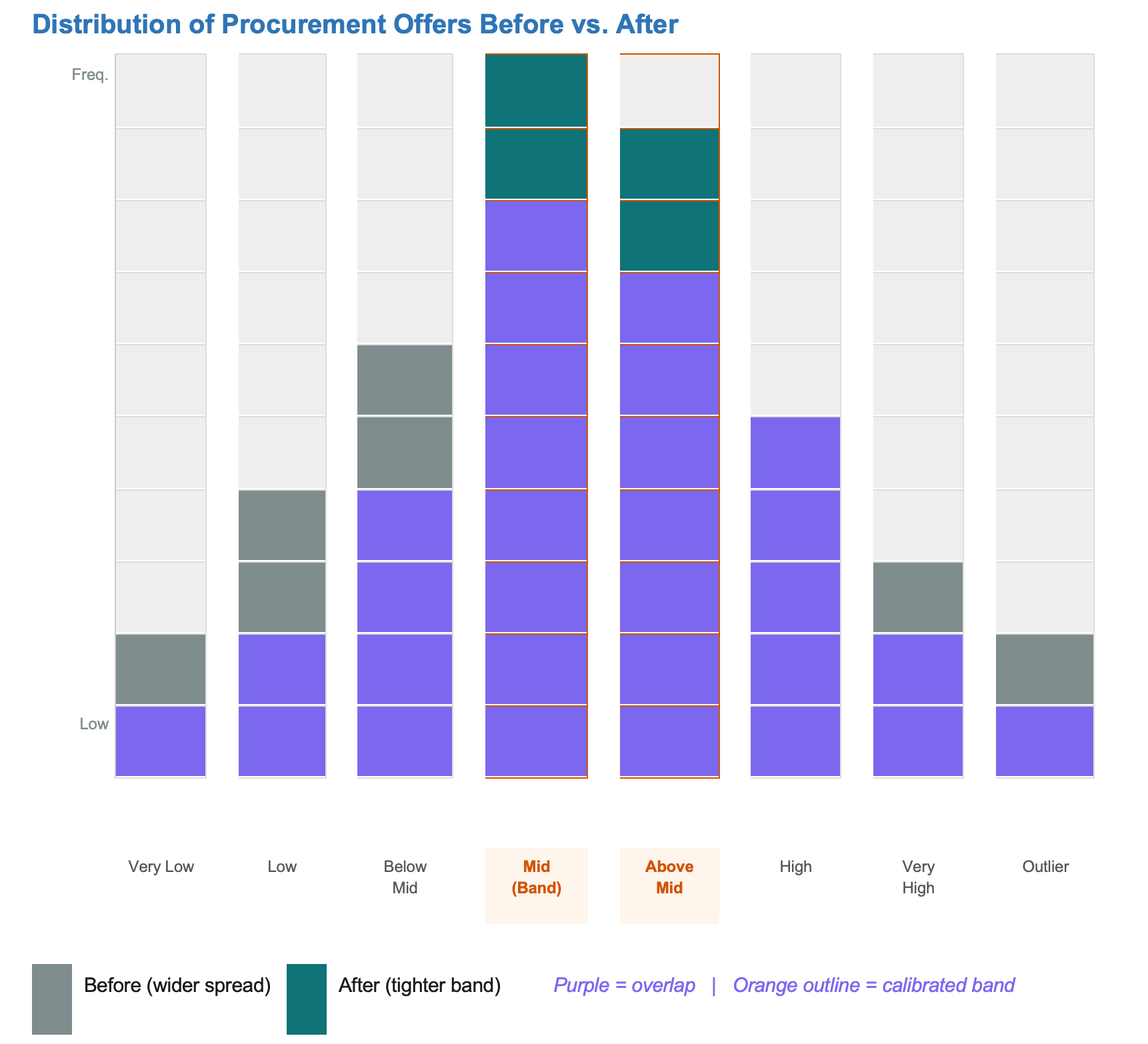

What Changed in the Data

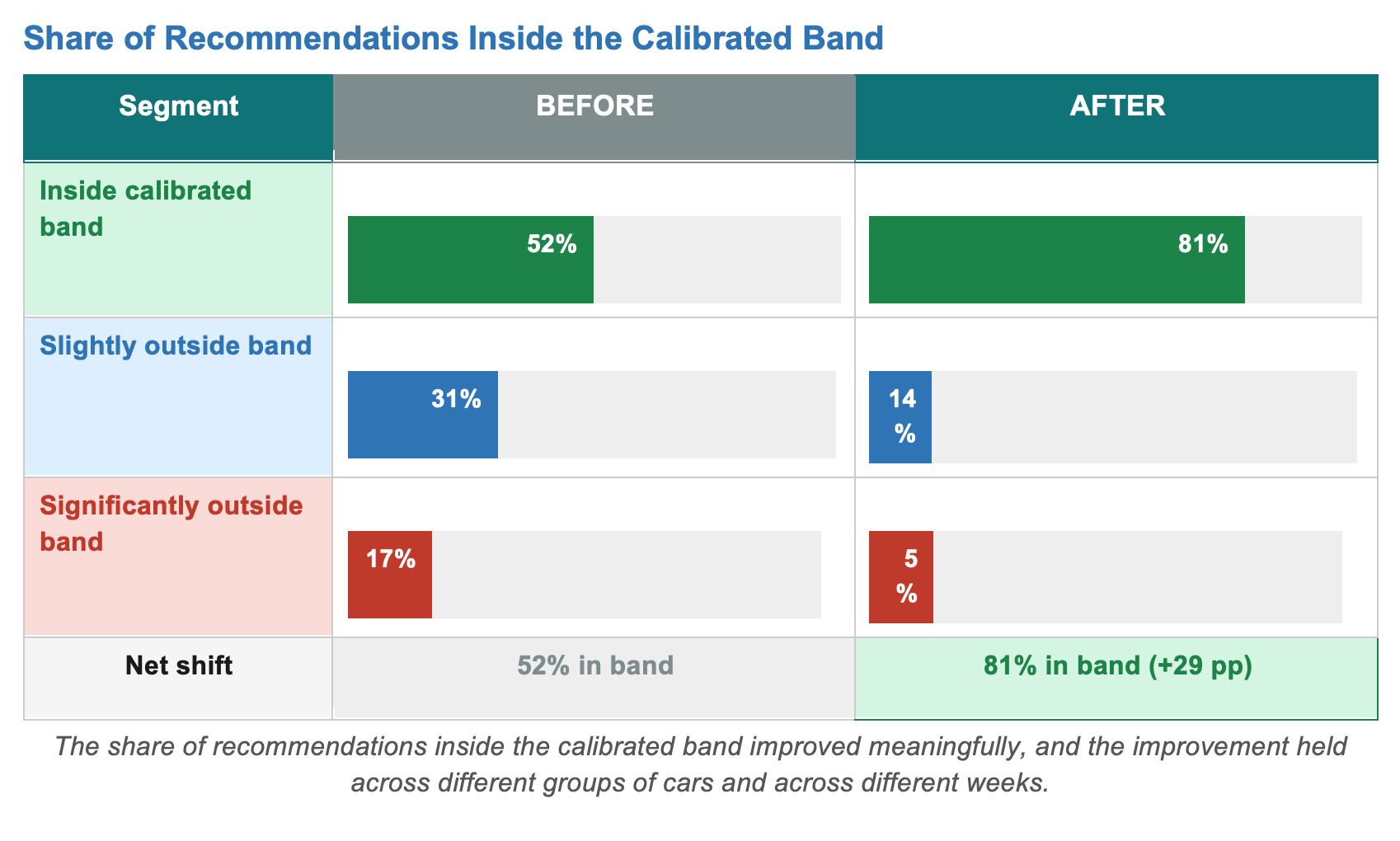

Rather than share specific numbers, we look at how the distribution of offers shifted before and after the new stack. The patterns below capture most of it.

The directional picture has been consistent since the new stack went live:

- Acquisition conversion improved in the groups where the new system replaced the old approach.

- Pricing consistency across groups improved, narrowing the gap between the best and worst-priced segments.

- The time it takes to react to market shifts shortened because the monitoring loop surfaces drift earlier than the previous setup did.

- Because every offer now traces through identifiable layers, explainability improved too.
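The article reports direction rather than numbers, so it may help to show one way a consistency signal could be computed. This is a hypothetical metric, not necessarily the one the team tracks. Within each group, it takes the spread (population standard deviation) of offer-to-fair-value ratios. Across groups, it takes the gap between the widest and narrowest spread. Running it on a before period and an after period gives a directional read.

```python
from statistics import pstdev


def consistency_report(records, min_n: int = 2):
    """records: iterable of (group_id, offer, fair_value) for one period.
    Returns (per-group spread of offer/fair_value, gap between widest and narrowest spread)."""
    ratios = {}
    for group, offer, fair_value in records:
        ratios.setdefault(group, []).append(offer / fair_value)
    # Groups with too few offers have no meaningful spread, so they are skipped.
    spread = {g: pstdev(r) for g, r in ratios.items() if len(r) >= min_n}
    if not spread:
        return {}, 0.0
    return spread, max(spread.values()) - min(spread.values())
```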

We expect the gains to keep compounding as the feedback loop matures and more groups of cars come onto the stack.

Five Things We Learned as a Team

1. Pricing is decision science, not just prediction.

A model that accurately predicts what a seller will accept is not, by itself, a pricing system. Most of the business value lives in the decision layer, where you take that prediction and decide what to actually do with it given everything else going on.

2. Interpretability is a feature, not a tax.

Being able to explain a price to the pricing team, to leadership, or to a customer-facing colleague is what allows the system to be improved over time. A system that cannot be explained can only be taken on trust, which is a much weaker position than one that can be understood and challenged.

3. Calibration matters more than leaderboard accuracy.

A model that looks great on standard accuracy metrics but is poorly calibrated within specific groups of cars will quietly mis-price entire segments in production. We invested in calibration checks that run continuously, not just at the point when the model is first trained.
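A coarse version of such a check is sketched below. For every group with enough recent offers, it compares the mean predicted acceptance with the observed acceptance rate. It flags groups where the two disagree by more than a tolerance. This is calibration-in-the-large per group, a simplified stand-in for the finer, binned calibration checks a team might run continuously. The function names and thresholds are hypothetical.

```python
from collections import defaultdict


def calibration_gaps(rows, min_n: int = 30) -> dict:
    """rows: (group_id, predicted_acceptance, accepted) tuples.
    Returns group -> observed acceptance rate minus mean predicted acceptance."""
    pred = defaultdict(list)
    obs = defaultdict(list)
    for group, p, accepted in rows:
        pred[group].append(p)
        obs[group].append(1.0 if accepted else 0.0)
    # Skip groups too small for the comparison to mean anything.
    return {g: sum(obs[g]) / len(obs[g]) - sum(pred[g]) / len(pred[g])
            for g in pred if len(pred[g]) >= min_n}


def miscalibrated(rows, tolerance: float = 0.05, min_n: int = 30) -> list:
    """Groups whose observed and predicted acceptance differ by more than tolerance."""
    gaps = calibration_gaps(rows, min_n)
    return sorted(g for g, gap in gaps.items() if abs(gap) > tolerance)
```

A model can be well calibrated overall while one group sits persistently above the line and another below. Checking group by group is what catches the quiet mis-pricing described above.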

4. Business constraints belong inside the model.

Treating guardrails as first-class parts of the system, rather than corrections applied after the fact, removes a whole class of subtle failure modes and makes the system far easier to audit.

5. Build the feedback loop from day one.

A pricing system without a closed loop gets stale fast. A pricing system with one gets sharper every week. We wish we had taken this more seriously on earlier iterations.

The new procurement pricing stack is not a single model. It is a layered, monitored, continuously learning system that turns acquisition pricing from a sequence of isolated point decisions into a coherent capability.

It builds on the work that came before it (Profecto, Profundus, and the inspection pipeline that powers them) and extends that work into harder territory. It goes beyond estimating what a car is worth to deciding what to actually offer for it. That decision depends on how similar cars have been behaving, what acceptance looks like at different price levels, and what the business needs from that acquisition.

The most useful shift for our team was a mental one. We stopped treating pricing as a prediction problem with some business rules layered on top, and started treating it as a decision problem that happens to use prediction as one of its inputs. Once that framing settled in, most of the architectural choices fell out naturally.

For a marketplace as varied and unpredictable as used cars, that is exactly the kind of compounding advantage we were after.

Behind the Journey: Acknowledging the People Who Made It Possible

Great products and impactful systems are never built in isolation; they are shaped through collaboration, persistence, and shared belief.

We would like to express our sincere appreciation to our Pricing Data Scientist, Bikash Paramanik, whose dedication, problem-solving mindset, and relentless efforts have been instrumental in bringing this initiative to its current scale and maturity.

We are equally grateful to Ishant Mehta, Yugesh Kumar and Sanyam Sharma for their valuable inputs, thoughtful discussions, and continuous support throughout the journey.

Finally, a special thanks to our CBO, Manoj Yadav, for his unwavering encouragement, strategic direction, and constant feedback, which helped steer this initiative forward at every stage.

Written collectively by the Cars24 Data Science team
